Both analyses agree the piece references an official PIB fact‑check and multiple media outlets, which lends it credibility. The critical perspective stresses the use of fear‑laden warnings, repeated identical phrasing and timing that suggest coordinated manipulation, while the supportive perspective highlights the inclusion of contextual background, acknowledgment of dissenting comments and reliance on independent fact‑checkers. Weighing the mixed evidence leads to a moderate assessment of manipulation risk.

Key Points

- The article cites an official government fact‑check and several independent outlets, supporting authenticity (supportive perspective).

- Repeated fear‑appeals and uniform wording across outlets point to coordinated framing (critical perspective).

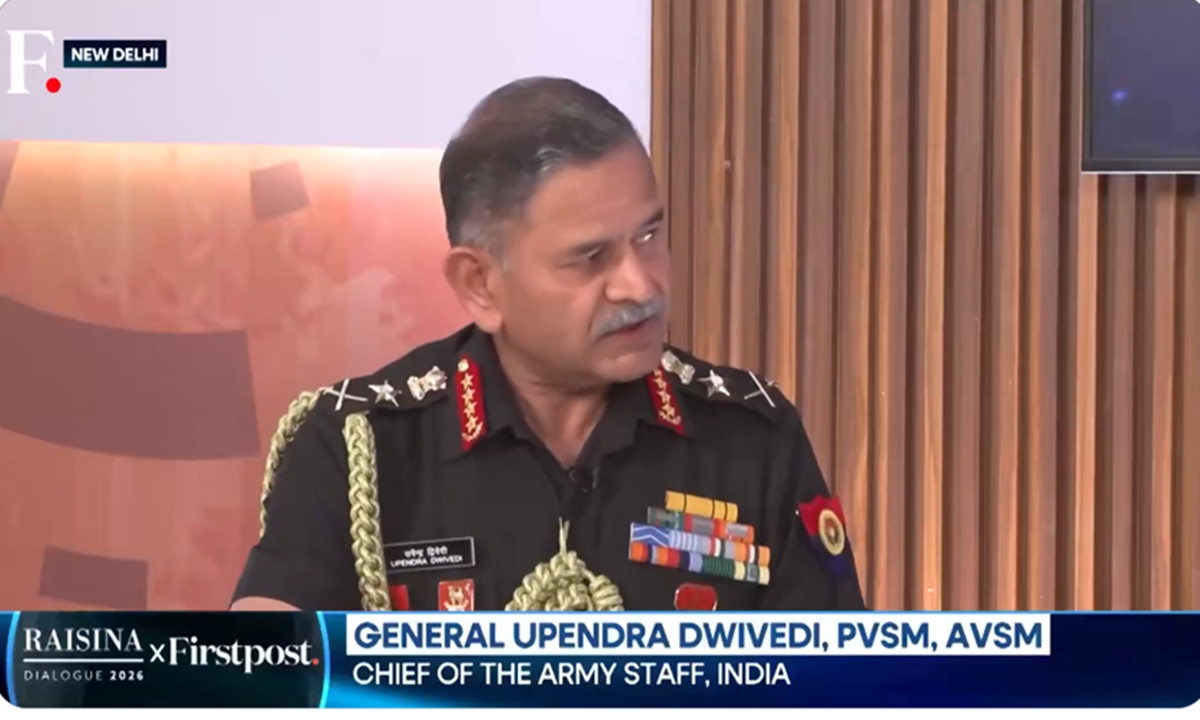

- Context about the original Raisina Dialogue interview is provided, but key details are omitted, leaving room for a selective narrative (both perspectives).

- The timing of the deep‑fake claim coincides with heightened geopolitical tension, which could amplify impact regardless of intent (critical perspective).

- Given the mixed signals, a balanced score reflects moderate manipulation concerns.

Further Investigation

- Obtain and analyze the original Raisina Dialogue footage to verify what was actually said.

- Examine the timestamps and metadata of the viral video posts to assess coordination and timing patterns.

- Review independent technical analyses of the video to confirm or refute deep‑fake characteristics.
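The timestamp and phrasing checks listed above can be sketched in a few lines of Python. This is only a minimal illustration under stated assumptions: the `posts` records, the `similar_pairs` helper, and both thresholds (`min_ratio`, `max_gap_minutes`) are hypothetical and do not come from the article or from any platform API. It flags pairs of posts whose text is nearly identical and whose timestamps fall close together.

```python
from datetime import datetime
from difflib import SequenceMatcher
from itertools import combinations

# Hypothetical sample records: (account, ISO timestamp, post text).
# Placeholders for illustration only, not data from the article.
posts = [
    ("account_a", "2026-01-01T10:00:00", "Shocking clip shows the minister admitting it"),
    ("account_b", "2026-01-01T10:04:00", "Shocking clip shows the minister admitting it!"),
    ("account_c", "2026-01-01T18:30:00", "Has anyone verified where this video came from?"),
]

def similar_pairs(posts, min_ratio=0.9, max_gap_minutes=30):
    """Return pairs of posts with near-identical text posted close together.

    Both thresholds are arbitrary assumptions and would need tuning on real data.
    """
    flagged = []
    for (acc1, ts1, text1), (acc2, ts2, text2) in combinations(posts, 2):
        # Character-level similarity in [0, 1]; case is ignored.
        ratio = SequenceMatcher(None, text1.lower(), text2.lower()).ratio()
        # Absolute time difference between the two posts, in minutes.
        gap = abs(datetime.fromisoformat(ts1) - datetime.fromisoformat(ts2))
        gap_minutes = gap.total_seconds() / 60
        if ratio >= min_ratio and gap_minutes <= max_gap_minutes:
            flagged.append((acc1, acc2, round(ratio, 2), gap_minutes))
    return flagged

for pair in similar_pairs(posts):
    print(pair)
```

A flagged pair is a prompt for closer review, not proof of coordination: accounts that quote the same source verbatim will also score high on text similarity.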

The piece uses framing, fear appeals, and coordinated language to steer readers toward viewing Pakistani actors as malicious propagandists and the Indian fact‑check as authoritative, while omitting broader context about the original interview and alternative explanations.

Key Points

- Attribution asymmetry and the labeling of Pakistani accounts as "propaganda" create an us‑vs‑them narrative.

- Repeated warnings like "Beware! This is an AI‑generated deepfake" invoke fear and urgency.

- Uniform phrasing across multiple outlets suggests coordinated messaging rather than independent reporting.

- The article provides limited context about the genuine Raisina Dialogue remarks, leaving key details missing.

- Timing of the deep‑fake claim aligns with heightened geopolitical attention on Iran‑Israel maritime tensions, enhancing impact.

Evidence

- "Pakistani propaganda accounts are sharing a digitally manipulated video"

- "Beware! This is an AI‑generated deepfake video shared to mislead the public"

- "identical phrasing—'Pakistani propaganda accounts are sharing a digitally manipulated video'—appears across PIB Fact Check, The Quint, NDTV"

- "No point debunking after 12 hours, after the whole thing has gone viral"

- "Global Times reporters noted under the PIB Fact Check post, some Indian X users were criticizing the belatedness of such debunking efforts"

The piece displays several hallmarks of legitimate communication: it relies on an official government fact‑check unit, cites multiple independent media outlets, provides contextual background for the original remarks, and acknowledges dissenting user comments without dismissing them.

Key Points

- Uses an official source (PIB Fact Check) that publicly posted the debunking on X

- Cross‑references independent fact‑checkers (The Quint) and original Raisina Dialogue footage

- Offers transparent context about the original interview and notes what was not said

- Encourages readers to verify through official channels rather than demanding immediate action

- Presents criticism of the fact‑check’s timing alongside the correction, showing balanced reporting

Evidence

- "PIB Fact Check described the clip as an AI‑generated deepfake..."

- "Multiple Indian media outlets and fact‑checkers, including The Quint, have traced the source clip to a genuine session at the Raisina Dialogue 2026"

- "The Quint report also highlighted that they did not find any mention about the Iranian ship sinking..."

- "PIB Fact Check urged the public to report such content and verify information through official sources"

- "Mohammed Zubair... wrote under the PIB post that 'Govt Fact Check is at least 10 hours late.'"